GitOps

Self-hosted, zero-downtime Kubernetes… and why I ditched it nine months later.

Oracle Cloud

Ansible

Kubernetes

Flux

Preface

Nine months after this writeup I found out that my website SSL certificate had, for some reason or other, become invalid. I also found out that I couldn't recall how and where I got that working to begin with, and that the OAuth token for my backend email service failed to auto-renew, so my contact form was also broken. Afraid to peer into the depths of my own creation, I did what any self-respecting developer would do in my shoes: I rewrote the entire website from the ground up. My concluding thoughts follow the original writeup.

Summary

I set up a CI/CD pipeline (Continuous Integration / Continuous Deployment, which automates testing and releases) using GitHub Actions to automate site updates. Docker is a tool for creating containers: mini operating systems that have instructions for how to install and run an application, so apps run identically anywhere Docker is installed. On each merge to main, the pipeline follows the Docker build → ship → run methodology:

- Build a Docker image of the site

- Ship the image to GitHub's Container Registry

- Run the image on my always-free Oracle Cloud VM (Virtual Machine)

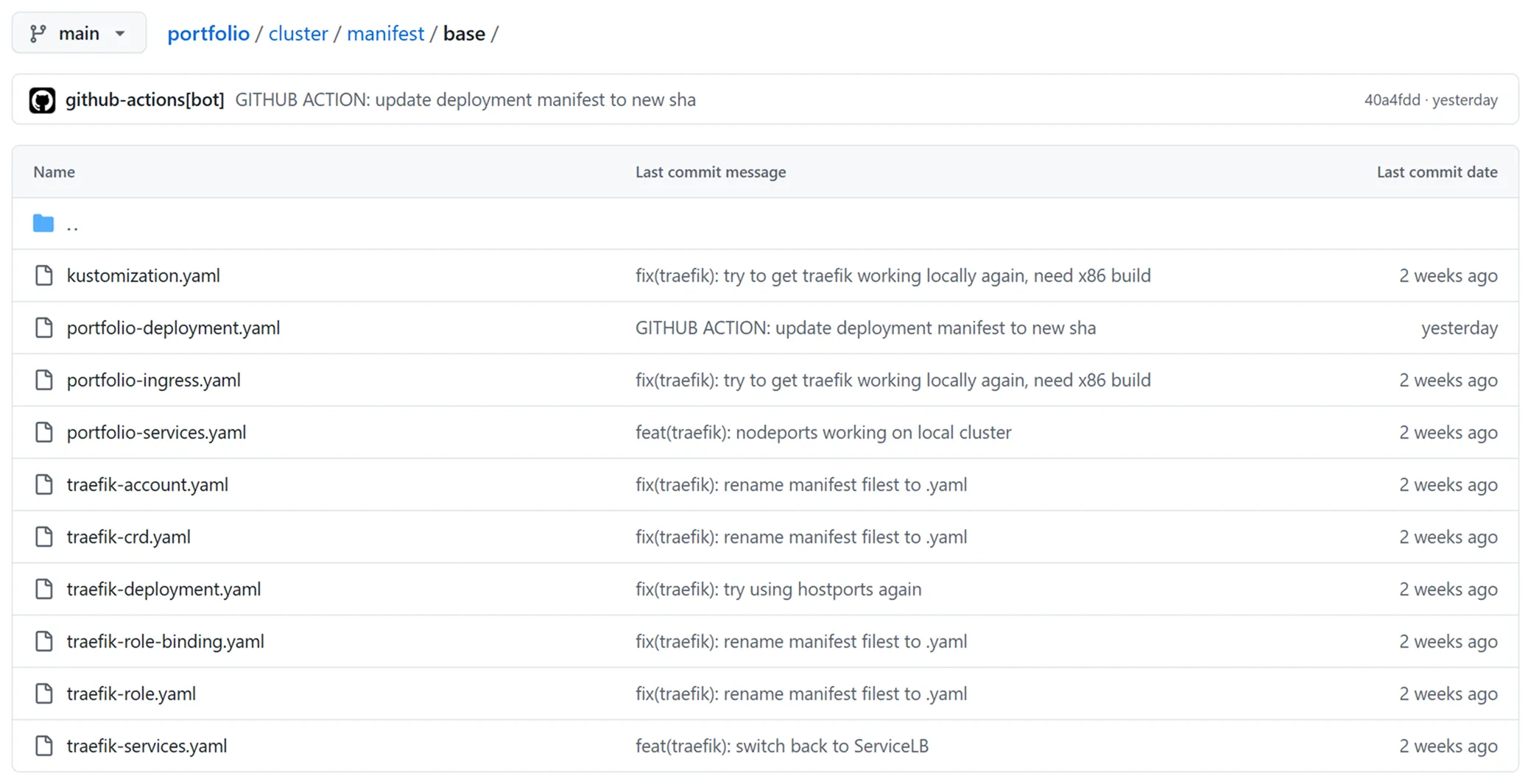

I bootstrap the VM with Ansible (an Infrastructure as Code tool used to configure virtual machines post-provisioning), setting up Kubernetes (a container orchestrator that automates the deployment, scaling and rolling updates of containers) and Flux (a GitOps CD tool), as seen in Figure 1. Flux watches the portfolio's Git repository and syncs the cluster with its state.

Purpose & Goal

I became interested in DevOps (a software development approach that follows the three ways: increasing left to right flow of work, increasing right to left flow of feedback, and cultivating a culture of continuous experimentation and learning) after reading The Phoenix Project during my 2023 summer internship at Thought Quarter. I saw this project as an opportunity to improve my mental model of CI/CD technologies and Docker. My acceptance criteria were:

- One-click cluster bootstrap

- One-click deploy

- Zero-downtime rolling updates

To build a foundation, I completed Docker Mastery and Kubernetes Mastery by Bret Fisher on Udemy.

Spotlight

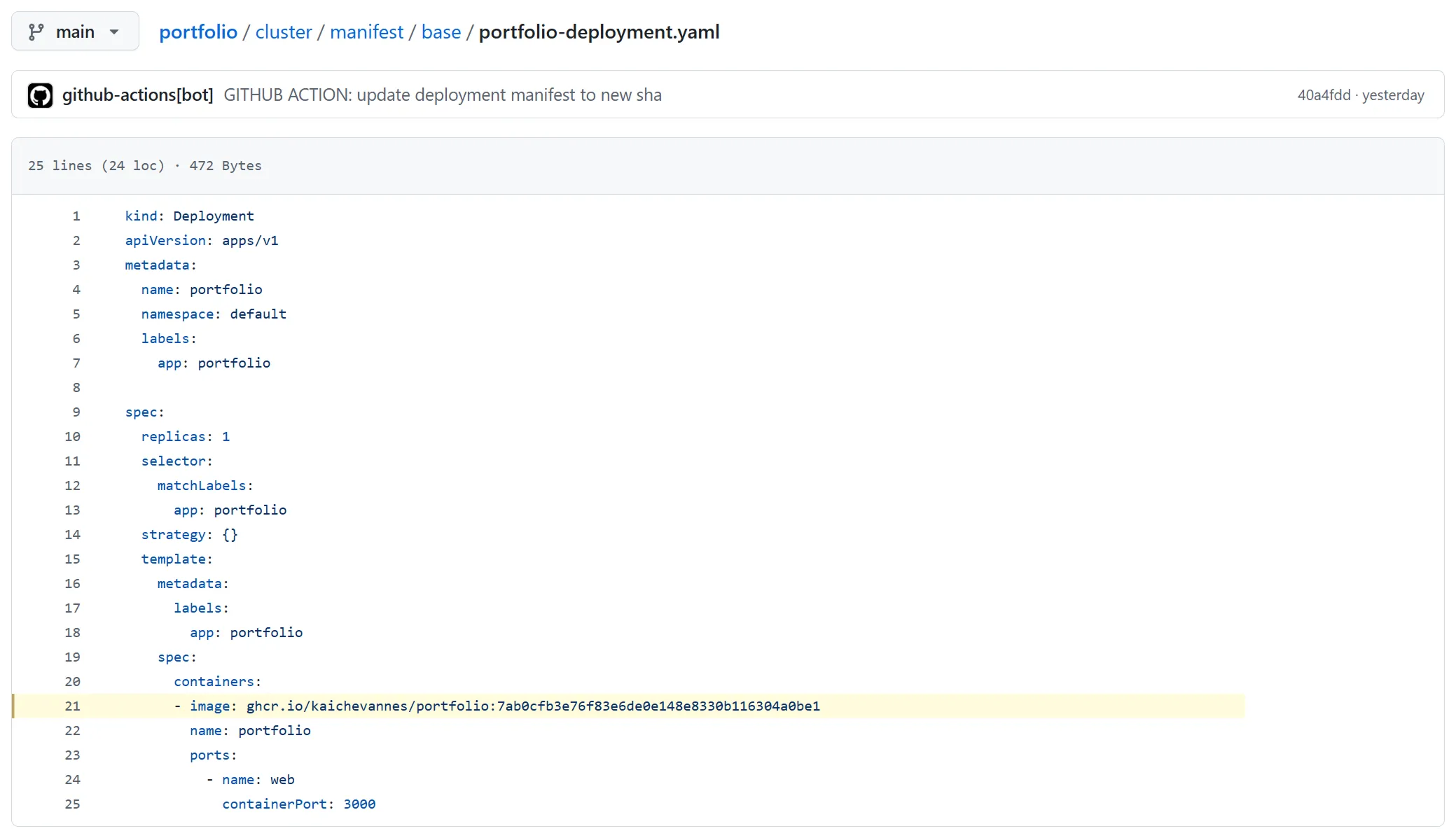

GitOps changes the run stage of build → ship → run. Instead of pushing changes to a Kubernetes cluster using a secret key, GitOps pulls the changes from source control. This eliminates the need to store high-privilege access keys, improving security. Figure 2 shows the Kubernetes manifest files (YAML files that store the declarative state of the cluster) that Flux syncs the cluster state with.
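As a rough illustration of the pull model, here is a minimal Python sketch of a reconcile step: compare the state declared in Git with the state running in the cluster and work out what has to change. This is a simplification under stated assumptions, not Flux's actual implementation. The `Action` type, the `reconcile` function, and the resource and image names are all hypothetical, and pruning of undeclared resources is modelled as always on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    verb: str  # "create", "update", or "delete"
    name: str  # resource name, e.g. "deployment/portfolio"


def reconcile(desired: dict[str, str], actual: dict[str, str]) -> list[Action]:
    """Return the actions needed to make `actual` match `desired`.

    Both dicts map a resource name to a string describing its spec
    (here, simply the container image it should run).
    """
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(Action("create", name))
        elif actual[name] != spec:
            actions.append(Action("update", name))
    # Anything running that Git no longer declares gets pruned.
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(Action("delete", name))
    return actions


# Hypothetical state: Git declares a new image tag, the cluster runs the old one.
desired_state = {"deployment/portfolio": "ghcr.io/example/portfolio:abc1234"}
actual_state = {
    "deployment/portfolio": "ghcr.io/example/portfolio:9f8e7d6",
    "deployment/old-experiment": "ghcr.io/example/experiment:1",
}
planned = reconcile(desired_state, actual_state)
print(planned)
```

The point of the sketch is the direction of flow: the cluster side reads the desired state and computes its own changes, so nothing outside the cluster needs credentials to push into it.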

One of the key benefits of GitOps is that, because the cluster state is stored in code, rolling back a release is the same as rolling back code. My CI/CD pipeline updates the deployment manifest to reference the current commit hash, which Flux detects and applies to the cluster with a Kubernetes rolling update.
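The "update the deployment to the current commit hash" step can be pictured as a small text rewrite of the manifest that is then committed back to the repository. The Python sketch below is one hypothetical way to do it, not the pipeline's actual code: the `set_image_tag` helper, the image name `ghcr.io/example/portfolio`, the tags, and the trimmed manifest are all assumptions made for illustration.

```python
import re


def set_image_tag(manifest: str, image: str, tag: str) -> str:
    """Point every `image: <image>:<old-tag>` line in `manifest` at `tag`."""
    pattern = re.compile(
        r"^(?P<prefix>[ \t]*(?:-[ \t]+)?image:[ \t]*)"
        + re.escape(image)
        + r":\S+[ \t]*$",
        flags=re.MULTILINE,
    )
    # A function replacement avoids any backslash handling in the tag text.
    return pattern.sub(lambda m: f"{m.group('prefix')}{image}:{tag}", manifest)


# A trimmed, hypothetical Deployment manifest (not a complete resource).
MANIFEST = """\
apiVersion: apps/v1
kind: Deployment
metadata:
  name: portfolio
spec:
  template:
    spec:
      containers:
        - name: web
          image: ghcr.io/example/portfolio:9f8e7d6
"""

updated = set_image_tag(MANIFEST, "ghcr.io/example/portfolio", "abc1234")
rolled_back = set_image_tag(updated, "ghcr.io/example/portfolio", "9f8e7d6")
print(updated)
```

Because the only thing that changes between releases is one line in a tracked file, reverting that commit (or rewriting the tag back, as `rolled_back` does) is the rollback.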

Challenges

The biggest challenge in self-hosting my portfolio was the networking. To serve a request from a user that types kaichevannes.com into their browser, I need to:

- Allow incoming traffic on HTTP and HTTPS to my Oracle Cloud VM through its ingress rules

- Route incoming requests to Traefik (a cloud native reverse proxy similar to nginx, designed for use with containers), running on my Kubernetes cluster

- Provide Traefik with the credentials to complete an ACME DNS challenge with my domain registrar (ACME is the Automatic Certificate Management Environment protocol, which automates the retrieval and renewal of the TLS certificates we need to serve requests over HTTPS)

- Persist the TLS certificate to avoid Let's Encrypt rate limits

- Redirect HTTP requests to HTTPS requests using a Kubernetes Ingress resource

- Forward HTTPS requests to Next.js (a full-stack meta-framework built on top of React)

The third step requires me to give Traefik a secret API token for authentication. In declarative methodologies like GitOps this presents a challenge, because the full cluster state is kept in source control.

In enterprise settings, your Kubernetes cluster is usually hosted with a cloud provider that has a built-in secret manager, like AWS (Amazon Web Services) or Azure (Microsoft Azure). Because I'm running a K3s cluster on a VM (K3s is a minimal Kubernetes distribution built for environments with limited resources), I need to handle secrets myself.

I use Bitnami Sealed Secrets, which generates a public/private key pair inside the cluster. I can encrypt the secret API token using the cluster's public key and safely store the result in source control. The private key needed to decrypt this secret only exists within the cluster.
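To make the asymmetry concrete, here is a toy Python sketch of the idea: anyone holding the public key can seal a value, but only the holder of the private key can unseal it. This is textbook RSA with tiny primes, purely for illustration. It is insecure and is not how Sealed Secrets is implemented; the `seal` and `unseal` functions, the key values, and the token string are all hypothetical.

```python
# Toy RSA with tiny primes: for illustration only, never for real secrets.
P, Q = 61, 53
N = P * Q                    # modulus, shared by both keys
PHI = (P - 1) * (Q - 1)
E = 17                       # public exponent
D = pow(E, -1, PHI)          # private exponent (modular inverse, Python 3.8+)

PUBLIC_KEY = (E, N)          # safe to publish; used on my laptop to seal
PRIVATE_KEY = (D, N)         # in the analogy, this never leaves the cluster


def seal(plaintext: str, public_key: tuple[int, int]) -> list[int]:
    """Encrypt each byte of `plaintext` with the public key."""
    e, n = public_key
    return [pow(b, e, n) for b in plaintext.encode("utf-8")]


def unseal(ciphertext: list[int], private_key: tuple[int, int]) -> str:
    """Decrypt a list produced by `seal` using the private key."""
    d, n = private_key
    return bytes(pow(c, d, n) for c in ciphertext).decode("utf-8")


sealed = seal("hypothetical-api-token", PUBLIC_KEY)  # what gets committed to Git
opened = unseal(sealed, PRIVATE_KEY)                 # what happens in-cluster
print(opened)
```

In the analogy, `sealed` is what lives in source control, and `unseal` only ever runs inside the cluster where the private key exists.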

Lessons Learned

This project taught me to slow down and make fewer assumptions. For example, when configuring Traefik locally I used the built-in Kubernetes Ingress resource, but the documentation for certificate verification used a custom IngressRoute resource. I didn't think this would change anything, so I skipped testing it locally, and this led me down a rabbit hole of attempting to fix what I thought was a networking issue in my broken deployment…

I tried changing my cluster configuration (only to break my bootstrap script), linking Traefik directly to my VM's network using Kubernetes host ports, forwarding requests using custom iptables rules, and swapping the networking backend of my cluster. Eventually I read the docs more thoroughly and realised it wasn't a networking issue at all. The problem was the configuration of the IngressRoute resource, which I had assumed would switch out one for one with my local Ingress resource.

I gained a better appreciation for documentation because the issues I was running into were difficult to Google. I also improved my understanding of working iteratively in small batch sizes; trying to put so many pieces together in one go led to such a large surface area for problems that I eventually had to take it step by step anyway. Next time, I'll start that way.

Postscript

While I'm happy that I maximised the complexity of frameworks and meta-frameworks and self-hosting and Docker and Kubernetes and zero-downtime for learning purposes, I did unfortunately end up with a system so devilishly complicated that I could no longer interact with it half a year later. For the rewrite, I went to the other extreme: vanilla HTML, vanilla CSS, vanilla JavaScript.

I simplified the code while retaining all functionality, and added enhancements like animations for tooltips closing. Features like dark mode become exceedingly complicated when you're working with SSR (Server Side Rendering), hydration, and (shudders) Webpack. It's easier to manage global state in vanilla HTML. I realised that the value of web frameworks is for sites that need to handle lots of dynamic content like in e-commerce, or that are very interactive like web apps. For anything static, the basics are the best tool.